AI referral traffic: sources, conversion rates & GA4 tracking

ChatGPT drives 87.4% of all AI referral traffic and those visitors convert 4.4x higher than organic. Here's what it means for your content strategy in 2026.

ChatGPT drives 87.4% of all AI referral traffic across tracked websites, making it the dominant AI search engine by a margin most marketing teams are not accounting for in their content or channel strategy. Not 40%. Not 60%. Eighty-seven percent.

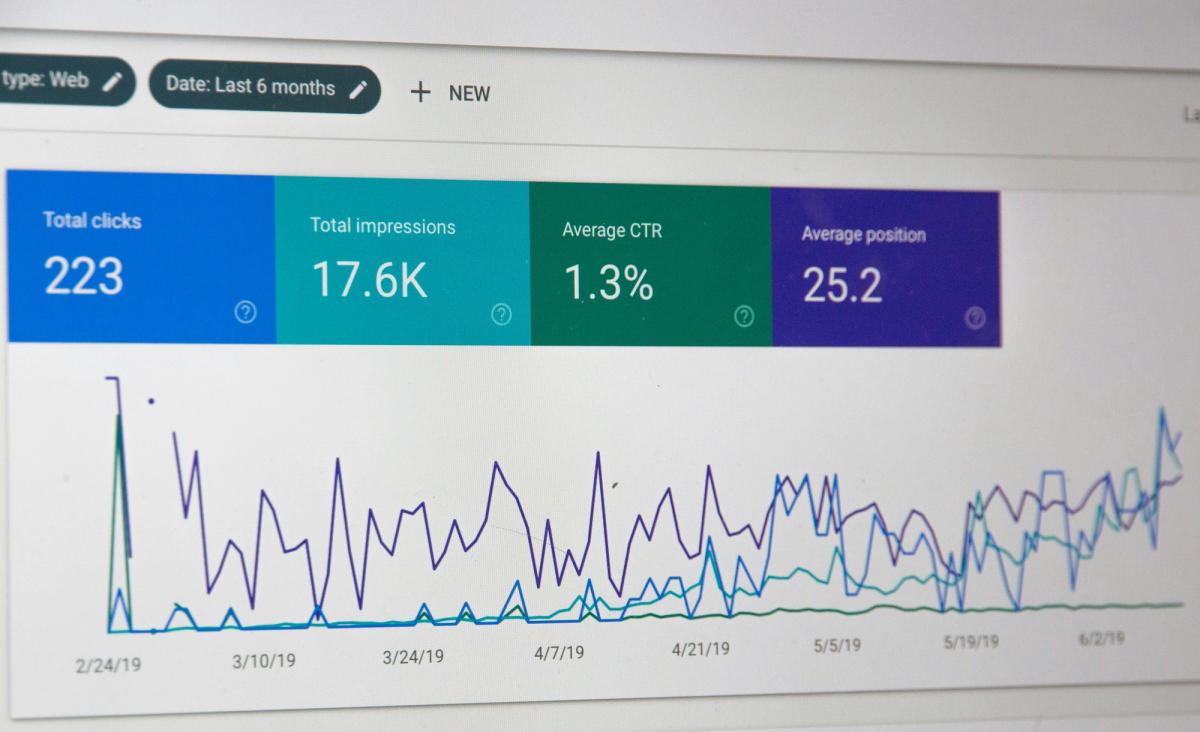

But here is the problem: most teams are still not measuring it correctly. ChatGPT traffic does not always arrive with a clean referral tag. Without the right GA4 setup, the majority of your AI-driven visits are being logged as direct traffic: invisible, unattributable, and unactionable.

This post covers three things your team needs right now: what the 87.4% figure actually means, how to set up GA4 to track it properly, and what content changes will increase your share of it. [If you are still unclear on what AI referral traffic is and why it matters, start with our guide to AI search visibility →]

Why the 87.4% number is not what you expect

Before drawing conclusions from the 87.4% figure, it is worth understanding what it measures and what it does not.

The statistic reflects website visits that originate from a click on a citation or link within an AI-generated response. It measures which AI engine is sending people to websites after generating an answer, not which engine is generating the most queries overall.

This matters because the 87.4% figure reflects two combined effects: ChatGPT's query volume advantage and its citation behaviour. An AI engine that generates many answers but rarely includes clickable citations would drive low referral traffic despite high usage. ChatGPT scores highly on both dimensions: over 400 million weekly active users and a consistent pattern of including specific, clickable citations in its responses.

The practical implication: a brand's absence from ChatGPT responses is not equivalent to its absence from Perplexity or Gemini responses. Missing from ChatGPT is a fundamentally larger problem, because ChatGPT accounts for the majority of the AI-driven traffic your competitors are capturing and you are not.

Related: What is query fan-out and why it determines whether your brand gets cited →

How to track AI referral traffic in GA4 step by step

This is the section most guides skip. Without the right setup, you are flying blind on the channel that converts best.

ChatGPT traffic does not always arrive with a clean referral tag. Depending on how a user accesses ChatGPT (desktop app, mobile, or embedded browser), the referral information may be stripped entirely, so the visit appears as direct traffic in your analytics rather than as a ChatGPT referral.

Step 1: Create a custom channel group in GA4

Go to Admin → Data Display → Channel Groups → Create new channel group.

Name it "AI referral traffic."

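Step 2: Add a regex rule to capture all major AI sources

Build the rule from the major AI referrer domains (openai.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com) and apply it to the Session source condition.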
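As a minimal sketch of what such a rule could look like, the pattern below combines the referrer domains listed in the FAQ of this post with chatgpt.com, which is an assumption added here for illustration. The Python snippet only sanity-checks the pattern against sample source strings before you paste it into GA4. The name `AI_SOURCE_PATTERN` and the sample values are hypothetical, and the leading and trailing `.*` assume a full-match regex condition.

```python
import re

# Assumed pattern: domains from the FAQ in this post, plus chatgpt.com
# (an assumption added for illustration). Adjust to the sources you actually see.
AI_SOURCE_PATTERN = (
    r".*(chatgpt\.com|openai\.com|perplexity\.ai|claude\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com).*"
)

def is_ai_source(session_source: str) -> bool:
    """Return True if the whole session source string matches the pattern."""
    return re.fullmatch(AI_SOURCE_PATTERN, session_source.lower()) is not None

# Hypothetical sample values, not real analytics data.
samples = ["chatgpt.com", "www.perplexity.ai", "google", "(direct)", "claude.ai"]
matches = [s for s in samples if is_ai_source(s)]
print(matches)
```

Checking the pattern offline first makes it easier to spot a source that is silently excluded, such as a subdomain you did not anticipate.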

Step 3: Verify the channel is capturing sessions

Go to Acquisition → Traffic acquisition and filter by your new AI referral channel. You should start seeing sessions attributed here within 24–48 hours.

Step 4: Set up a conversion comparison report

Create a report that compares conversion rate by channel. This lets you see how AI-referred visitors are converting versus organic search, paid, and direct, which is where the 4.4x conversion differential becomes visible in your own data rather than just as an industry average.
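If you export the channel data, the comparison is simple arithmetic. The sketch below assumes rows of (channel, sessions, conversions); the function names and every number in it are hypothetical and are not Lantern or GA4 data.

```python
from collections import defaultdict

def conversion_rate_by_channel(rows):
    """Aggregate (channel, sessions, conversions) rows into {channel: rate}.

    Channels with zero sessions are skipped to avoid dividing by zero.
    """
    sessions = defaultdict(int)
    conversions = defaultdict(int)
    for channel, s, c in rows:
        sessions[channel] += s
        conversions[channel] += c
    return {ch: conversions[ch] / sessions[ch] for ch in sessions if sessions[ch] > 0}

def lift_vs(rates, channel, baseline):
    """Ratio of one channel's conversion rate to a baseline channel's rate."""
    return rates[channel] / rates[baseline]

# Hypothetical export rows, for illustration only.
rows = [
    ("AI referral traffic", 250, 8),
    ("Organic Search", 8000, 80),
    ("Direct", 3000, 30),
    ("AI referral traffic", 150, 4),
]
rates = conversion_rate_by_channel(rows)
lift = lift_vs(rates, "AI referral traffic", "Organic Search")
print(f"AI referral converts at {lift:.1f}x the organic rate")
```

Your own lift may land well above or below the industry figure, which is the point of measuring it.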

Once this is configured, you have the baseline. Every AI search optimisation decision you make from this point is measurable rather than directional.

Related: Why AI crawlers do not render JavaScript and what it means for whether your content gets cited at all →

The conversion rate that changes the budget conversation

AI-driven visitors convert 4.4 times higher than standard organic search visitors. Lantern's attribution data confirms this pattern: AI-referred visitors arrive pre-qualified, having already received a recommendation from an engine they trust. The AI has vouched for the brand. The visitor is not discovering the product for the first time. They are investigating a recommendation they have already received.

The important caveat: the 4.4x figure is a category average across Lantern-tracked domains. More recent data from individual brand datasets shows meaningful variation by industry: B2B SaaS tends to see higher conversion lifts from AI referral traffic than e-commerce, where Adobe's Cyber Week data showed strong volume but lower revenue per session than Google organic. Your brand's specific conversion rate by AI engine source may differ significantly from the average.

This is the argument for measuring at the engine level rather than treating AI referral traffic as a single channel. A brand receiving 95% of its AI referral traffic from ChatGPT is highly dependent on a single engine. A brand with more distributed AI traffic (ChatGPT plus meaningful Perplexity and Gemini contributions) has a more resilient AI search presence.

A channel that converts at 4.4 times the rate of organic search is not supplementary. It is a primary revenue channel that happens to be significantly underinvested in relative to its return.

The conversion paradox: why the data seems to contradict itself

You may have seen conflicting reports: some studies show 4.4x conversion lift from AI referral traffic, others show lower revenue per session than Google. Both are correct, and the contradiction is explainable.

The discrepancy comes down to what is being measured. Studies showing higher conversion rates are typically measuring conversion rate: the percentage of AI-referred visitors who complete a target action (sign-up, demo request, purchase). Studies showing lower revenue per session are measuring revenue per visit, which factors in average order value and session depth, not just whether a conversion occurred.

AI-referred visitors convert more often but sometimes spend less per transaction, particularly in e-commerce. In B2B SaaS, where the target action is a demo request or free trial sign-up rather than a direct purchase, the conversion rate lift is the more relevant metric, and it holds up strongly in Lantern's dataset.

The takeaway: measure both. Conversion rate tells you if AI search is working. Revenue per session tells you if the visitors it sends are the right fit.
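A worked example makes the paradox concrete. Revenue per session equals conversion rate multiplied by average order value, so a channel can win on the first metric and lose on the second. The sketch below uses entirely hypothetical numbers, and the `ChannelStats` class is an illustrative helper rather than part of any analytics tool.

```python
from dataclasses import dataclass

@dataclass
class ChannelStats:
    sessions: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        # Share of sessions that ended in an order.
        return self.orders / self.sessions

    @property
    def average_order_value(self) -> float:
        # Revenue per completed order.
        return self.revenue / self.orders

    @property
    def revenue_per_session(self) -> float:
        # Equivalent to conversion_rate * average_order_value.
        return self.revenue / self.sessions

# Hypothetical e-commerce figures, for illustration only.
ai = ChannelStats(sessions=1000, orders=40, revenue=2000.0)
organic = ChannelStats(sessions=1000, orders=20, revenue=3000.0)

for name, ch in (("AI referral", ai), ("Organic", organic)):
    print(f"{name}: CR={ch.conversion_rate:.1%}, "
          f"AOV={ch.average_order_value:.2f}, RPS={ch.revenue_per_session:.2f}")
```

In this made-up case the AI channel converts twice as often, yet each session is worth less because the orders are smaller.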

What ChatGPT cites differently from every other engine

Understanding ChatGPT's 87.4% traffic dominance requires understanding how it selects citations, because its behaviour is meaningfully different from Perplexity, Gemini, and Claude in ways that require specific content adaptations.

Lantern's February 2026 citation data reveals a finding most AEO guides have not yet addressed: ChatGPT cites product pages at 20.1%, fifty times the rate of Perplexity, which cites product pages at just 0.4%.

This is not a marginal difference. It is a structural divergence with direct consequences for content strategy.

A brand that has invested heavily in blog content and listicles (the formats that dominate citation rates on Perplexity, Gemini, and Claude) is not fully optimised for ChatGPT. The engine responsible for the overwhelming majority of AI-driven visits is the one most likely to cite a product page directly. The engine most likely to cite a listicle drives a fraction of the actual traffic.

Related: The content format that wins AI citations: what Lantern's data shows →

The ChatGPT-specific content checklist

Optimise product pages for citation, not just conversion

Every product page should contain a clear one-paragraph definition of what the product does, who it is for, and what outcome it delivers in the first 150 words, written as a standalone extractable claim rather than marketing copy that requires context to interpret.

Add FAQ schema to every product and feature page

ChatGPT constructs answers to evaluation queries by extracting relevant content from cited sources. FAQ schema pre-formats your answers to the questions "what does X do," "who is X best for," and "how does X compare to Y" in a structure ChatGPT can extract directly.
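For teams that generate markup programmatically, the sketch below builds a schema.org `FAQPage` object and wraps it in a JSON-LD script tag. The `build_faq_jsonld` helper and the placeholder questions and answers are hypothetical; substitute your own product copy.

```python
import json

def build_faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

# Placeholder copy, for illustration only.
qa_pairs = [
    ("What does X do?", "Placeholder: one-sentence description of X."),
    ("Who is X best for?", "Placeholder: the team or role X is built for."),
    ("How does X compare to Y?", "Placeholder: the key difference between X and Y."),
]

faq = build_faq_jsonld(qa_pairs)
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Keeping each answer self-contained matters more than the markup itself, since an extracted answer has to make sense without the rest of the page.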

Publish comparison content targeting specific evaluation contexts

ChatGPT cites comparison content at 10.3%. The comparison queries that trigger ChatGPT citations tend to be specific and evaluation-focused: "Lantern vs Profound for a 10-person B2B SaaS marketing team" rather than "best AEO tools." Build comparison pages that address team size, use case, budget range, and existing tool stack.

Ensure your brand entity is documented across authoritative external sources

ChatGPT's entity recognition draws heavily on how well a brand is documented across G2, Crunchbase, LinkedIn, and other authoritative platforms. A brand that exists as a well-documented entity across multiple independent sources is cited with significantly higher confidence than one that exists primarily on its own domain.

Related: 91% of AI citations ignore your website — here is why →

Build content that answers the specific prompts your buyers use in ChatGPT

ChatGPT users ask longer, more specific, more conversational questions than Google users. A buyer researching your category in ChatGPT is more likely to ask "what is the best AI search visibility platform for a B2B SaaS marketing team that already uses HubSpot and has a budget of around $500 per month" than to type "best AEO tool." Content that addresses these specific, context-rich queries is more likely to be cited.

Related: What is query fan-out — and why it determines whether your content gets picked up →

What the other 12.6% tells you

The 87.4% ChatGPT traffic share does not mean the other AI engines are irrelevant.

Perplexity deserves the most attention among non-ChatGPT engines for a specific reason: its user base skews heavily toward B2B professional and technical audiences: researchers, analysts, and technical professionals who are often further along in the buying journey than the average ChatGPT user. A B2B SaaS brand may find that Perplexity's share of its AI referral traffic is higher than the 12.6% category average, and that the visitors it sends convert at particularly high rates.

Gemini's trajectory is also worth watching. Between September and November 2025, Gemini referral traffic grew 388% year over year while ChatGPT's rose 1% over the same period. Gemini is not yet significant in referral volume, but the growth rate suggests it will matter more in the second half of 2026.

The 87.4% figure tells you where to prioritise. It does not tell you to ignore the rest.

Related: Most cited domains across ChatGPT, Perplexity, Gemini, and Claude — the pattern →

The three metrics that matter

AI referral traffic by engine. Once your GA4 channel group is configured, you can see exactly what share of your AI referral traffic comes from ChatGPT versus other engines, and how that share is changing over time. A brand receiving 95% of its AI referral traffic from ChatGPT is highly dependent on a single engine.
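A rough way to compute engine share from exported session counts is sketched below. The domain-to-engine mapping is an assumption (chatgpt.com in particular is added for illustration), and the session numbers are hypothetical.

```python
from collections import Counter

# Assumed mapping from referrer domain to engine label; extend as needed.
ENGINE_BY_DOMAIN = {
    "chatgpt.com": "ChatGPT",  # assumption, not listed in this post
    "openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def engine_for(source):
    """Return the engine label for a session source, or None if it is not an AI source."""
    source = source.lower()
    for domain, engine in ENGINE_BY_DOMAIN.items():
        if source == domain or source.endswith("." + domain):
            return engine
    return None

def engine_shares(sessions_by_source):
    """Return {engine: share of AI referral sessions}, ignoring non-AI sources."""
    totals = Counter()
    for source, sessions in sessions_by_source.items():
        engine = engine_for(source)
        if engine is not None:
            totals[engine] += sessions
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {engine: n / grand_total for engine, n in totals.items()}

# Hypothetical session counts, for illustration only.
sessions_by_source = {
    "chatgpt.com": 170,
    "www.perplexity.ai": 20,
    "gemini.google.com": 10,
    "google": 5000,
}
shares = engine_shares(sessions_by_source)
top_engine, top_share = max(shares.items(), key=lambda kv: kv[1])
print(f"{top_engine} accounts for {top_share:.0%} of AI referral sessions")
```

Tracking the top engine's share over time is a simple proxy for the single-engine dependence described above.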

ChatGPT citation rate by prompt type. Knowing that ChatGPT cites you is less useful than knowing which prompt types trigger citations. A brand cited on branded queries ("what is [brand name]") but not on category queries ("what is the best tool for X") has a fundamentally different AI search problem than a brand cited on both.

Conversion rate by AI engine source. The 4.4x conversion rate is a category average. Your brand's specific rates by engine source may vary significantly — and the brands that measure this at the source level make better investment decisions than those working from category averages.

FAQ

How do I track AI referral traffic in Google Analytics 4? Create a custom channel group in GA4 under Admin → Data Display → Channel Groups. Add a regex rule capturing the major AI referrer domains (openai.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com). This separates AI-referred sessions from direct traffic, where they are currently being misattributed.

How much of my website traffic comes from ChatGPT? For most brands, AI referral traffic is currently around 1% of total sessions but growing at roughly 1% per month. Within that AI referral pool, ChatGPT accounts for 87.4% on average. Without the GA4 custom channel group setup above, however, most of this traffic is being attributed to direct, so your current analytics almost certainly understate it.

Do AI referral visitors convert better than organic search visitors? On average, yes: AI-referred visitors convert 4.4x higher than standard organic search visitors across Lantern-tracked domains. The mechanism is pre-qualification: the AI has already recommended the brand before the visitor clicks. However, revenue per session varies by industry, particularly in e-commerce where average order values can differ from Google-referred buyers.

What percentage of AI traffic comes from ChatGPT vs Perplexity? Across Lantern-tracked domains, ChatGPT accounts for 87.4% of AI referral traffic. Perplexity, Gemini, Claude, and Copilot collectively account for the remaining 12.6%. Industry variation is significant: B2B technology brands tend to see a higher-than-average Perplexity share given its research-oriented user base.

The window this data opens

Most brands in most B2B SaaS categories are not yet optimising specifically for ChatGPT. They are running generic AEO programmes that treat all AI engines equivalently, producing content formats that perform well on Perplexity and Gemini, and measuring AI search performance at a level of aggregation that makes engine-specific optimisation impossible.

The brands that read this data correctly and act on it are building a structural advantage in the channel that drives 87.4% of AI referral traffic. Acting on it means optimising product pages for ChatGPT's citation preferences, adding FAQ schema, targeting conversational prompt patterns, and measuring citation rates and conversion at the engine level.

That advantage compounds. Citation authority in ChatGPT builds over time as the model learns which sources consistently provide reliable, specific, extractable answers to evaluation queries. The window is open now.

See how your brand is currently performing in ChatGPT and across all major AI engines. Get your free Lantern visibility report →

Key takeaways

- ChatGPT drives 87.4% of all AI referral traffic, so optimising equally across all AI engines misallocates the majority of your AI search effort

- AI-referred visitors convert 4.4x higher than standard organic traffic on average, making it the highest-converting acquisition channel most brands are underinvesting in

- Without a GA4 custom channel group, most ChatGPT referral traffic is being misattributed to direct; the regex setup above fixes this immediately

- ChatGPT cites product pages at 20.1%, fifty times Perplexity's rate, so product page optimisation is a first-priority ChatGPT citation strategy

- The AI referral conversion paradox is explainable: conversion rate is higher, revenue per session varies by industry and business model

- Perplexity deserves disproportionate attention among non-ChatGPT engines for B2B brands given its research-oriented, high-intent user base

- The three metrics that matter: AI referral traffic by engine, ChatGPT citation rate by prompt type, and conversion rate by AI engine source

Lantern tracks your ChatGPT citation rate, prompt-level visibility, and AI referral traffic attribution across all major engines in one dashboard, connected directly to your GA4 account. Start your free trial →